Generative AI is splitting industrial robotics into four distinct layers: (1) LLM-based code and motion-script generation for existing controller languages; (2) vision-language-action (VLA) foundation models for end-to-end manipulation without explicit programming; (3) generative simulation platforms that produce synthetic training data at scale; and (4) AI-augmented offline programming with natural-language teach pendants. As of 2026, Layers 1 and 4 are in production at measurable scale; Layers 2 and 3 remain largely at pilot stage, with high-mix bin picking the main production exception for Layer 2.

Layer 1: LLM Code and Motion-Script Generation

The most immediate application of generative AI in industrial robotics does not require new hardware or a different robot architecture. It replaces, or at minimum accelerates, the process of writing robot programs in controller-specific languages by using large language models trained on code corpora that include robot programming syntax.

Industrial robot controllers each use a proprietary language: FANUC Teach Pendant (TP) code, ABB RAPID, KUKA Robot Language (KRL), and Universal Robots’ URScript are the four most widely deployed in automotive and general manufacturing. An LLM with exposure to these language grammars can generate, complete, and debug programs from natural-language instructions in much the same way GitHub Copilot completes Python or JavaScript. The analogy is deliberate: early LLM robotics tools are positioned explicitly as “GitHub Copilot for robot programming.”

ABB has moved furthest among the OEM tier with a production-oriented AI assistance feature embedded in RobotStudio, its OLP platform. ABB’s internal LLM integration allows engineers to describe a trajectory objective in plain English, such as “generate a torch path along the top flange of this bracket at 60 degrees torch angle and 0.4 m/s travel speed,” and receive RAPID code as output. The engineer reviews, adjusts, and validates; the LLM shortens the drafting phase rather than eliminating the engineer.

Microsoft Research’s Robotics Copilot project, published in 2024, demonstrated an architecture where natural-language skill descriptions are converted to structured robot policy code through an LLM intermediary layer. The system handled pick-and-place and assembly tasks described entirely in English, with the LLM inferring object references from scene context rather than requiring explicit coordinate inputs. The research framing (skills via natural language) influenced subsequent commercial integrations.

FANUC’s Zero Downtime (ZDT) platform has added an LLM assistant layer for anomaly explanation and corrective code suggestion, positioning it not as a program authoring tool but as a debugging and maintenance accelerator for existing TP code libraries.

According to industry observations from early LLM code-generation pilots across automotive and general fabrication environments, programming time for new robot routines decreases by 25–40% when LLM code-completion tools are integrated into standard OLP workflows, with the largest gains on repetitive, parameterizable programs such as pick-and-place sequences and standardized weld passes. Gains narrow significantly for complex multi-axis coordinated motion where engineer review time dominates over code authoring time.

The constraints are real. LLMs can generate syntactically correct RAPID or TP code that is kinematically invalid: paths that place the tool outside the robot’s reachable workspace, or that pass through a wrist singularity mid-path. Generated code requires the same reachability and collision-detection validation pass that manually written OLP programs require. The LLM compresses authoring time; it does not replace verification. This is a structural limitation until formal verification tools are integrated directly into the generation pipeline, an area of active research as of 2026.
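The reachability part of that validation pass can be illustrated on a deliberately simple case. The sketch below assumes a planar two-link arm (link lengths `l1` and `l2`, a hypothetical stand-in for a six-axis robot) and labels each waypoint as reachable, unreachable, or near a singularity, using the analytic inverse kinematics and the Jacobian determinant `l1 * l2 * sin(q2)`. A production check would run the full kinematic model of the specific robot inside the OLP tool; for a six-axis arm with a spherical wrist, the analogous wrist singularity typically occurs as joint 5 approaches 0° in common zero conventions.

```python
import math


def two_link_ik(x, y, l1, l2):
    """Inverse kinematics for a planar 2-link arm (branch with sin(q2) >= 0).

    Returns (q1, q2) in radians, or None if (x, y) lies outside the reachable annulus.
    """
    c2 = (x * x + y * y - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)
    if c2 < -1.0 or c2 > 1.0:
        return None  # distance to target is outside [|l1 - l2|, l1 + l2]
    s2 = math.sqrt(1.0 - c2 * c2)
    q2 = math.atan2(s2, c2)
    q1 = math.atan2(y, x) - math.atan2(l2 * s2, l1 + l2 * c2)
    return q1, q2


def check_path(points, l1, l2, det_tol=1e-3):
    """Label each Cartesian waypoint as OK, UNREACHABLE, or NEAR_SINGULAR."""
    labels = []
    for x, y in points:
        sol = two_link_ik(x, y, l1, l2)
        if sol is None:
            labels.append("UNREACHABLE")
        elif abs(l1 * l2 * math.sin(sol[1])) < det_tol:
            # det(J) = l1 * l2 * sin(q2) vanishes when the arm is fully stretched or folded
            labels.append("NEAR_SINGULAR")
        else:
            labels.append("OK")
    return labels


print(check_path([(0.5, 0.5), (1.0, 0.0), (1.2, 0.0)], l1=0.5, l2=0.5))
# → ['OK', 'NEAR_SINGULAR', 'UNREACHABLE']
```

Any waypoint not labeled `OK` would send the generated program back for revision instead of to the controller.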

For deeper context on how AI code generation integrates with the broader OLP software stack, see our companion article on Robotic Welding Offline Programming, Simulation and Digital Twin (2026).

Layer 2: VLA Foundation Models for End-to-End Manipulation

Vision-language-action models represent a fundamentally different approach to robot programming. Rather than generating code for a deterministic controller, a VLA model takes camera images and a natural-language instruction as input and outputs robot actions directly, as a single end-to-end neural network. There is no separate path planner, no explicit coordinate specification, and no post-processor. Within the range of its training distribution, the model generalizes to novel objects and configurations in ways that explicitly programmed routines cannot.

Key Research Milestones

The VLA research trajectory has accelerated significantly since 2023. RT-2 (Google DeepMind, 2023) demonstrated that a model initialized from a vision-language pre-trained backbone could exhibit emergent reasoning, performing tasks it was never explicitly trained on by combining concepts learned from web-scale data. This established the precedent for using large foundation models as robot policy backbones.

OpenVLA (Stanford University, 2024) provided a fully open-weights alternative at 7 billion parameters based on the Prismatic VLM architecture. OpenVLA’s Apache 2.0 license and fine-tuning accessibility made it the primary research and early-industrial-pilot baseline for labs and integrators without access to Google’s proprietary compute.
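RT-2 and OpenVLA both emit actions as tokens by discretizing each continuous action dimension into 256 bins. The sketch below shows the idea under a simplifying assumption: uniform bins over a normalized range of [-1, 1] (OpenVLA instead sets per-dimension bin bounds from the action statistics of its training data). Decoding returns the bin center, so the round-trip error is at most half a bin width.

```python
def tokenize_action(action, low=-1.0, high=1.0, n_bins=256):
    """Map each continuous action dimension to an integer bin index in [0, n_bins)."""
    width = (high - low) / n_bins
    tokens = []
    for a in action:
        a = min(max(a, low), high)  # clip to the assumed normalized range
        # a == high would land on index n_bins, so fold it into the last bin
        tokens.append(min(int((a - low) / width), n_bins - 1))
    return tokens


def detokenize_action(tokens, low=-1.0, high=1.0, n_bins=256):
    """Map bin indices back to the center of each bin."""
    width = (high - low) / n_bins
    return [low + (t + 0.5) * width for t in tokens]


# Hypothetical 7-D action (e.g. 6-DoF end-effector delta + gripper), already normalized.
action = [0.12, -0.40, 0.0, 0.05, -0.99, 0.3, 1.0]
tokens = tokenize_action(action)
print(tokens)
print(detokenize_action(tokens))
```

Because each dimension becomes a separate classification over 256 classes, the policy can reuse a language model's token-prediction head, at the cost of quantized, per-step outputs.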

Physical Intelligence’s π0 (2024–25) introduced a flow-matching action head in place of discrete token classification, producing smoother trajectories better suited to multi-stage dexterous tasks. Figure AI’s Helix (2024–25), deployed on the Figure 02 humanoid at BMW’s Spartanburg plant, takes a two-model approach: a high-level VLM handles scene understanding while a low-level sensorimotor model executes at control frequency, separating the language-reasoning loop from the real-time control loop.
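The contrast with a token head is clearest at inference time. A flow-matching head starts from Gaussian noise and integrates a learned velocity field toward an action (or a flattened chunk of future actions) over a handful of steps. The sketch below assumes Euler integration from t = 0 (noise) to t = 1 (action), one common convention, and replaces the trained, observation-conditioned network with a hypothetical analytic field that points straight at a fixed target. It illustrates the sampling loop only, not π0's architecture.

```python
import random


def sample_action(velocity_fn, dim, n_steps=10, seed=0):
    """Euler-integrate dx/dt = v(x, t) from Gaussian noise at t = 0 to an action at t = 1."""
    rng = random.Random(seed)
    x = [rng.gauss(0.0, 1.0) for _ in range(dim)]
    dt = 1.0 / n_steps
    for k in range(n_steps):
        t = k * dt  # never reaches 1.0, so the toy field below never divides by zero
        v = velocity_fn(x, t)
        x = [xi + dt * vi for xi, vi in zip(x, v)]
    return x


# Hypothetical stand-in for a trained network conditioned on images and language:
# the straight-line (rectified-flow) velocity toward a fixed target action.
TARGET = [0.10, -0.20, 0.05, 0.0, 0.0, 0.30, 1.0]


def toy_velocity(x, t):
    return [(g - xi) / (1.0 - t) for g, xi in zip(TARGET, x)]


print(sample_action(toy_velocity, dim=len(TARGET)))  # ends at (numerically) TARGET
```

The output is a continuous vector produced jointly across all dimensions, rather than an independent bin choice per dimension as in a token head.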

Google DeepMind’s Gemini Robotics (2025) applies the Gemini multimodal model family to dexterous manipulation and 3D spatial reasoning tasks. NVIDIA GR00T N1 and GR00T-Mimic (2025) constitute a humanoid-specific VLA stack — GR00T N1 weights are publicly released, making it one of the first humanoid foundation models available for external fine-tuning. The accompanying GR00T-Mimic pipeline generates synthetic demonstration data from human video, partially closing the teleoperation data gap.

The Open X-Embodiment dataset, which pools 60 existing datasets from 34 research labs (21 institutions) across 22 robot types, remains the most significant cross-institutional data sharing effort in the field. Training on this heterogeneous data improves cross-robot generalization: a policy trained on Open X-Embodiment transfers more reliably to new robot hardware than one trained on single-platform data.

According to the Stanford AI Index 2025, the number of peer-reviewed papers on robot learning and foundation model-based robot control grew by over 60% between 2022 and 2024, outpacing growth in any other subfield of applied AI. The Index notes that industrial deployment lags research publication by a median of 18–36 months for manipulation tasks requiring sub-millimeter precision, reflecting the gap between demonstration feasibility and production-grade reliability.

Industrial Deployment Status

As of 2026, VLA deployment in production industrial settings is concentrated in two application categories where the flexibility argument is strongest and the cost of occasional failure is manageable: high-mix bin picking and flexible assembly for loose-tolerance tasks. Covariant’s RFM-1 in e-commerce fulfillment is the most cited commercial deployment. Automotive pilot programs with Figure AI Helix represent the most visible heavy-industry test case.

Welding, heavy-payload manipulation, and precision assembly remain outside the current VLA production envelope. The stochastic inference behavior of neural-network action heads creates variance that can exceed process tolerances in applications requiring sub-0.1 mm repeatability. For welding specifically, welding procedure specification (WPS) traceability requirements under ISO 3834 and AWS D1.1 create additional barriers to replacing deterministic path execution with probabilistic policy inference.

EVST, whose product roadmap now includes humanoid robots, data-collection dexterous hands, and quadruped robots alongside its industrial robot and welding system lines, is positioned across this transition. Its data-collection dexterous hand platform addresses the demonstration data bottleneck that VLA training depends on. This is an area where hardware investment now has a direct downstream impact on foundation model quality. For background on embodied AI architecture and VLA model mechanics, see Embodied AI in Industrial Robotics: How VLA Models Are Changing Robot Programming. For context on the data collection infrastructure supporting these models, see Embodied AI Data Collection: Teleoperation and Sim-to-Real (2026).

Layer 3: Generative Simulation and Synthetic Training Data

VLA models need demonstration data at scale. Physical teleoperation — a human operating a robot through a haptic interface while the system records joint states and camera streams — is the highest-quality data source, but it is expensive and slow. According to industry observations, collecting a usable demonstration dataset for a novel manipulation task costs roughly 10–100 hours of operator time per task variant. Generative simulation addresses this bottleneck by generating synthetic training data in software at a fraction of the physical cost.

NVIDIA Cosmos and Isaac Sim 4.5

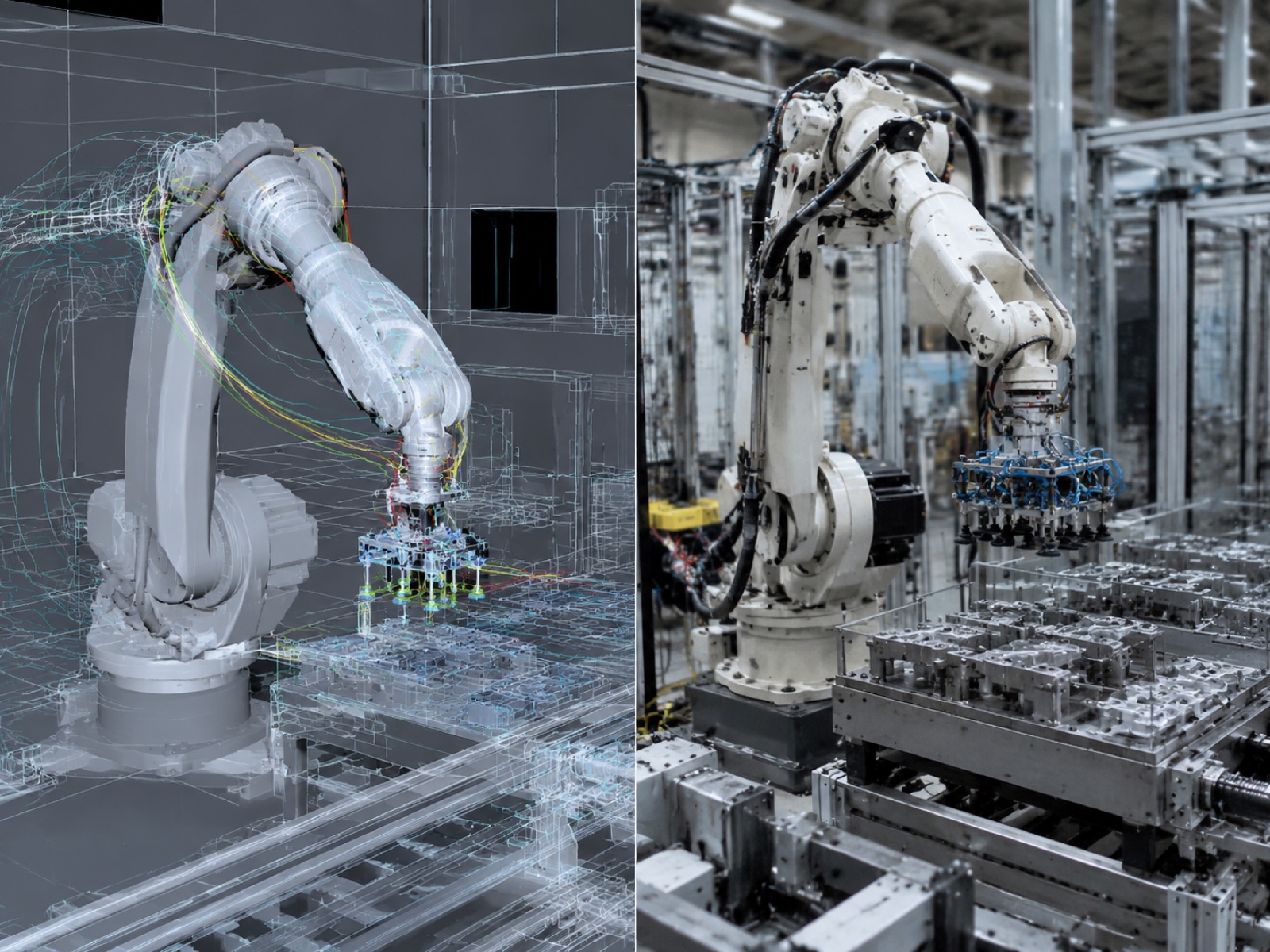

NVIDIA Cosmos World Foundation Models, introduced at CES in January 2025 and expanded at GTC 2025 alongside GR00T N1, generate physically plausible training videos from text or image prompts. Rather than relying on traditional simulator rendering, Cosmos produces photorealistic synthetic video that more closely matches real camera image statistics, reducing the visual domain-shift component of the sim-to-real gap. NVIDIA Isaac Sim 4.5 provides the underlying physically accurate simulation environment, running parallel environments on NVIDIA GPU infrastructure to collect robot trajectory data at the scale that foundation model training requires.

Together, Cosmos (photorealistic synthetic video generation) and Isaac Sim (parallel physics-based trajectory collection) constitute NVIDIA’s integrated answer to the training data problem for humanoid and industrial robot foundation models.

Open-Source and Academic Generative Simulators

Genesis (Tsinghua University) is an open-source generative simulation platform that uses differentiable physics and generative scene composition to produce diverse training scenarios at high throughput. DreamGen applies video diffusion models to generate robot manipulation demonstrations conditioned on language descriptions, enabling data augmentation without additional physical robot time. RoboCasa provides a large-scale simulation benchmark for household and kitchen manipulation tasks, with particular utility for humanoid robot training on bimanual dexterous scenarios.

Sim-to-Real Gap: 2024–25 Progress

The sim-to-real gap (the drop in policy performance when a model trained in simulation is deployed on a physical robot) has narrowed measurably between 2023 and 2025 through three mechanisms. Domain randomization (randomly varying textures, lighting, object masses, and friction coefficients during simulation training) remains the most widely deployed technique. Photorealistic rendering via Cosmos and similar tools reduces visual domain shift. And hybrid data pipelines that mix synthetic trajectories with a smaller volume of real teleoperation data have shown consistent performance improvements over pure sim or pure real training in published benchmarks.
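The first and third mechanisms can be sketched together. The snippet below assumes a hypothetical simulator that accepts per-episode lighting, texture, mass, and friction settings; the parameter names and ranges are placeholders to be tuned per task, not values from any published pipeline. `mixed_batch` then draws each training batch with a fixed share of real teleoperation trajectories.

```python
import random
from dataclasses import dataclass


@dataclass
class EpisodeParams:
    light_scale: float     # multiplier on nominal scene lighting
    texture_id: int        # index into a hypothetical texture bank
    object_mass_kg: float
    friction_coeff: float


# Placeholder randomization ranges (assumptions; tune per task and simulator).
RANGES = {
    "light_scale": (0.5, 1.5),
    "texture_id": (0, 49),
    "object_mass_kg": (0.2, 1.0),
    "friction_coeff": (0.3, 1.1),
}


def sample_episode(rng):
    """Draw one randomized simulation configuration."""
    return EpisodeParams(
        light_scale=rng.uniform(*RANGES["light_scale"]),
        texture_id=rng.randint(*RANGES["texture_id"]),  # inclusive bounds
        object_mass_kg=rng.uniform(*RANGES["object_mass_kg"]),
        friction_coeff=rng.uniform(*RANGES["friction_coeff"]),
    )


def mixed_batch(synthetic, real, real_fraction, batch_size, rng):
    """Sample a batch with a fixed fraction of real trajectories; the rest are synthetic."""
    n_real = round(batch_size * real_fraction)
    batch = rng.sample(real, n_real) + rng.sample(synthetic, batch_size - n_real)
    rng.shuffle(batch)
    return batch


rng = random.Random(42)
episodes = [sample_episode(rng) for _ in range(1000)]
synthetic = [("sim", i) for i in range(500)]
real = [("real", i) for i in range(40)]
batch = mixed_batch(synthetic, real, real_fraction=0.25, batch_size=32, rng=rng)
print(sum(1 for src, _ in batch if src == "real"))  # 8 of 32
```

Keeping the real fraction as an explicit parameter makes it easy to sweep when comparing pure-sim, pure-real, and hybrid training runs.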

According to NVIDIA’s published developer ecosystem data, more than 700 industrial companies were using NVIDIA Omniverse and Isaac Sim for digital twin, simulation, and synthetic data generation applications as of early 2026, with robotics manipulation and mobile robot navigation among the top application categories. NVIDIA’s disclosed developer adoption figures represent a proxy indicator for the pace at which generative simulation infrastructure is entering industrial workflows, though per-cell deployment detail is not separately disclosed.

Layer 4: Natural-Language OLP and AI-Augmented Teach Pendant

Layer 4 applies generative AI at the point where most factory robot programming currently occurs: the teach pendant, or its software equivalent in an OLP tool. The difference from Layer 1 is context. Layer 1 generates general-purpose code from language descriptions. Layer 4 integrates directly with a specific process — welding, painting, assembly — and generates fully parameterized, process-aware motion programs from operator-level natural-language inputs, including process parameters, not just geometric paths.

The functional example that captures this layer’s value: an operator instructs a welding system, “weld a 200 mm seam at the bottom corner of this part,” and the system returns a complete torch path with approach angle, travel speed, weave parameters, and voltage settings drawn from a process library, without manual waypoint definition. The operator confirms and runs. This is qualitatively different from a code-completion tool that generates RAPID syntax from a geometry description; it is an end-to-end process generation system.
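The shape of such a system can be sketched in a few lines, with heavy simplification. Below, a regular expression stands in for the language model, a keyword table maps operator wording to a joint type, and a hypothetical process library supplies the parameters. The numbers are placeholders, not qualified WPS values, and the names are illustrative rather than any vendor's API. Seam location itself would come from the CAD model or a 3D scan.

```python
import re
from dataclasses import dataclass


@dataclass
class WeldProgram:
    joint: str
    length_mm: float
    torch_angle_deg: float
    travel_speed_mm_s: float
    weave: str
    voltage_v: float


# Hypothetical process library; placeholder values for illustration only.
PROCESS_LIBRARY = {
    "fillet": {"torch_angle_deg": 45.0, "travel_speed_mm_s": 6.0, "weave": "none", "voltage_v": 22.0},
    "butt": {"torch_angle_deg": 90.0, "travel_speed_mm_s": 5.0, "weave": "zigzag", "voltage_v": 24.0},
}

# Hypothetical mapping from operator wording to joint type, checked in order.
JOINT_KEYWORDS = [("corner", "fillet"), ("fillet", "fillet"), ("butt", "butt")]


def parse_instruction(text):
    """Turn an operator instruction into a parameterized program, or raise if it is ambiguous."""
    m = re.search(r"(\d+(?:\.\d+)?)\s*mm", text)
    if m is None:
        raise ValueError("no seam length found; ask the operator")
    lowered = text.lower()
    joint = next((j for kw, j in JOINT_KEYWORDS if kw in lowered), None)
    if joint is None:
        raise ValueError("joint type not recognised; ask the operator")
    return WeldProgram(joint=joint, length_mm=float(m.group(1)), **PROCESS_LIBRARY[joint])


print(parse_instruction("weld a 200 mm seam at the bottom corner of this part"))
```

Raising instead of guessing when the instruction is incomplete mirrors the confirm-before-run step: the operator resolves ambiguity, and the library, not the language layer, supplies process parameters.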

Vendor Implementations

ABB RobotStudio has integrated a Copilot-style natural-language assistant that accepts process descriptions and returns structured robot programs for review. The integration sits above the RAPID code layer — the LLM reasons about the process, then generates RAPID — rather than requiring the engineer to specify the language-level program directly. For welding applications, the assistant can reference RobotStudio’s welding power-source library to populate arc parameters automatically.

FANUC’s ZDT platform has added natural-language diagnostic and corrective-suggestion capability, complementing rather than replacing the TP code authoring workflow. The assistant interprets alarm codes and cycle-time anomalies in plain language and proposes parameter adjustments, reducing reliance on specialist programmers for routine maintenance and tuning tasks.

Among OEMs deploying Layer 4 capability in welding-specific applications, EVST’s EVS-AI welding system integrates 3D vision auto-scan with automated path generation. A 3D camera scans the workpiece, extracts weld seam geometry and fit-up data, and the system generates a complete robot weld program (path, torch orientation, travel speed, and process parameters), drawing from the RX welding process library that pre-loads typical joint geometries and multi-pass sequences. The system eliminates manual teach-in for standard fillet, butt, and lap joints on carbon steel and aluminium, and supports dual-wire and multi-pass multi-layer sequences for higher-complexity parts. CE, SGS, and TÜV third-party certification covers integration into quality-managed production environments. This positions the EVS-AI system at the production-ready end of Layer 4, distinct from the LLM-first approaches still in validation at other vendors. For a detailed software-stack comparison that includes this system, see Robotic Welding Offline Programming, Simulation and Digital Twin (2026).
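One step of a scan-to-path pipeline can be sketched independently of any vendor. Once seam geometry has been extracted as a 3D polyline, waypoints are placed at a fixed spacing along it, and the local seam direction gives the travel direction about which torch work and travel angles are then set from the process library. The function below is a generic, hypothetical illustration of that resampling step, not EVST's implementation; `step_mm` is an assumed parameter.

```python
import math


def resample_seam(polyline, step_mm):
    """Resample a 3D seam polyline (mm) at a fixed arc-length step.

    Returns a list of (point, unit_tangent) pairs; the tangent is the travel direction.
    """
    out = []
    carry = 0.0  # arc length into the current segment where the next sample falls
    for p, q in zip(polyline, polyline[1:]):
        seg = [qi - pi for pi, qi in zip(p, q)]
        length = math.sqrt(sum(c * c for c in seg))
        if length == 0.0:
            continue  # skip duplicate scan points
        tangent = tuple(c / length for c in seg)
        s = carry
        while s <= length + 1e-9:
            out.append((tuple(pi + s * ti for pi, ti in zip(p, tangent)), tangent))
            s += step_mm
        carry = s - length  # leftover distance carries into the next segment
    return out


seam = [(0.0, 0.0, 0.0), (120.0, 0.0, 0.0), (120.0, 80.0, 0.0)]  # hypothetical L-shaped seam, mm
waypoints = resample_seam(seam, step_mm=20.0)
print(len(waypoints))  # 11 waypoints
```

Carrying the leftover distance across segment boundaries keeps the spacing uniform along the whole seam, which matters because travel speed is specified per unit length.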

According to IFR World Robotics data, the share of newly installed welding robots shipped with offline programming or advanced simulation software capability grew from approximately 25 percent in 2019 to an estimated 48 percent in 2024. The addition of natural-language path generation interfaces represents the next incremental capability layer above OLP, and early adopter data from welding system integrators indicates that AI-assisted 3D scan-to-path systems reduce first-piece programming time by 80–95% on standard fillet and butt weld geometries compared to manual OLP methods.

Industrial Vertical Readiness by Application

Generative AI readiness varies substantially across industrial verticals. The determinants are tolerance requirements, regulatory and quality traceability obligations, data availability, and the cost of failure.

Welding

AI vision quality control and AI seam tracking are deployed at production scale in welding cells as of 2026 — these are Layer 4 implementations using structured-light cameras and pattern-matching models, not VLA inference. Full VLA-based welding path generation, where a neural-network policy drives torch motion without a deterministic process library, is estimated to reach controlled production deployment by 2026–2028 for standard carbon steel geometries, conditional on WPS traceability standards being updated to accommodate AI-generated path records.

Assembly and High-Mix Manufacturing

AI vision-guided assembly using force-controlled insertion and LLM constraint reasoning is in pilot deployment at automotive tier-1 and consumer electronics manufacturers. Tasks that accept positional error above 0.5 mm (cable routing, snap-fit clips, label placement) are the strongest near-term fit. Tight-tolerance insertion tasks (sub-0.1 mm) retain classical motion controllers for precision actuation, with VLA handling task selection and coarse positioning above the deterministic layer.

Logistics and Bin Picking

High-mix bin picking and palletizing represent the most mature VLA deployment vertical in 2026. Covariant’s RFM-1 and Dexterity’s robotic palletizing systems have moved beyond pilot into production at fulfillment centers. The commercial argument, handling novel SKUs without per-item programming, is directly enabled by foundation model generalization.

Heavy and Tier-1 Automotive

Heavy-payload manufacturing (structural steel, press-line tending, body-in-white) and Tier-1 automotive body shops remain on deterministic controllers. IATF 16949 process control requirements, audit trail obligations, and the capital cost of unplanned downtime make stochastic AI inference commercially unviable at scale in 2026. These environments will adopt generative AI primarily through Layer 1 (code generation for OLP acceleration) and Layer 3 (simulation data generation) before any Layer 2 VLA deployment.

According to the McKinsey Global Institute 2025 report on AI in industrial operations, manufacturing sectors with strict quality certification requirements (automotive, aerospace, medical devices) adopt AI automation tools at approximately half the rate of general fabrication and logistics, primarily due to the additional validation burden imposed by quality management system requirements. The report estimates that AI-augmented programming tools (Layer 1 and Layer 4 in the framework described here) will reach majority adoption in non-automotive industrial robot applications by 2028, while full VLA deployment in certified quality environments will require an additional two to four years beyond that threshold.

Risks and Barriers

Hallucination and Safety: AI-Generated Unsafe Trajectories

LLMs can generate syntactically valid robot programs that are kinematically or dynamically unsafe. A generated path may place the end-effector outside the robot’s reachable workspace, drive through a singularity, or prescribe joint velocities that exceed dynamic limits. This is not a theoretical risk — it has been observed in early LLM code-generation pilots when validation steps were skipped or abbreviated. The necessary mitigation is a mandatory formal verification layer: reachability analysis, collision detection, and dynamic-limit checking must be applied to every AI-generated path before upload to the physical controller. No AI code generation system should bypass this layer, regardless of how confident the model output appears.
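Two of the three checks reduce to simple arithmetic once the path has been converted to joint space. The sketch below assumes a joint-space path sampled at a fixed interval `dt` and hypothetical per-joint position and speed limits (real values come from the robot's data sheet and controller configuration). It returns a list of violations, so any non-empty result blocks upload. Collision detection needs the cell geometry and is left to the OLP tool's collision checker.

```python
from dataclasses import dataclass
from typing import List, Sequence


@dataclass
class JointLimits:
    q_min: Sequence[float]   # rad
    q_max: Sequence[float]   # rad
    qd_max: Sequence[float]  # rad/s


def validate_joint_path(path, dt, limits) -> List[str]:
    """Check joint-position and finite-difference joint-speed limits; an empty list means pass."""
    errors = []
    for k, q in enumerate(path):
        for j, qj in enumerate(q):
            if not limits.q_min[j] <= qj <= limits.q_max[j]:
                errors.append(f"waypoint {k}: joint {j} at {qj:.3f} rad is outside its range")
    for k in range(1, len(path)):
        for j, (a, b) in enumerate(zip(path[k - 1], path[k])):
            speed = abs(b - a) / dt  # average joint speed over this segment
            if speed > limits.qd_max[j]:
                errors.append(
                    f"segment {k - 1}->{k}: joint {j} needs {speed:.2f} rad/s, limit {limits.qd_max[j]:.2f}"
                )
    return errors


limits = JointLimits(q_min=[-3.0, -2.0], q_max=[3.0, 2.0], qd_max=[2.0, 2.0])
path = [[0.0, 0.0], [0.1, 0.05], [0.6, 0.05], [0.6, 2.5]]  # hypothetical AI-generated path
for e in validate_joint_path(path, dt=0.1, limits=limits):
    print(e)
```

A finite-difference check only bounds average speed between waypoints; acceleration and torque limits need the timing law and a dynamic model.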

For VLA models, the safety challenge is more fundamental. A neural-network policy’s response to out-of-distribution inputs — a new object geometry, an unexpected lighting condition, a partially occluded scene — may degrade gracefully or fail abruptly, and predicting which will occur is not straightforward with current interpretability tools. ISO 10218-3, which will cover AI-generated robot code and AI-driven motion planning, is in pre-development as of 2026. The interim approach, consistent with SOTIF (ISO 21448) reasoning, is to define a strict operational design domain and restrict AI operation outside it until additional validation evidence accumulates.
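An operational design domain can be enforced as an explicit gate in front of the policy. The sketch below assumes four hypothetical observable quantities with placeholder bounds. In practice the bounds should be set from the conditions actually covered by validation data, and the fallback behaviour defined in the cell's risk assessment.

```python
from dataclasses import dataclass


@dataclass
class Observation:
    lux: float                  # measured illuminance at the work area
    object_max_dim_mm: float    # largest dimension of the detected part
    occlusion_fraction: float   # share of the part hidden from the camera
    detector_confidence: float  # perception confidence for the part


# Hypothetical ODD bounds (placeholders, not recommended values).
ODD = {
    "lux": (300.0, 1500.0),
    "object_max_dim_mm": (20.0, 400.0),
    "occlusion_fraction": (0.0, 0.3),
    "detector_confidence": (0.8, 1.0),
}


def odd_violations(obs):
    """Return the names of observed quantities that fall outside the declared ODD."""
    out = []
    for field, (lo, hi) in ODD.items():
        if not lo <= getattr(obs, field) <= hi:
            out.append(field)
    return out


def select_mode(obs):
    """Run the AI policy only inside the ODD; otherwise hand over to the fallback."""
    return "ai_policy" if not odd_violations(obs) else "fallback_stop_and_request_operator"


print(select_mode(Observation(800.0, 150.0, 0.1, 0.95)))
print(odd_violations(Observation(50.0, 150.0, 0.1, 0.95)))
```

Logging which bound tripped gives the evidence needed to widen the ODD later, one validated condition at a time.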

Compute Cost and Capex Implications

Running VLA inference in a production cell requires a dedicated GPU compute node. A 7B-parameter model (OpenVLA scale) can run on a single mid-range GPU at 6–10 Hz inference frequency; larger models require multiple high-end GPUs. According to industry observations from robot cell integrators evaluating VLA deployments, the GPU hardware cost for a single inference node adds approximately USD 4,000–8,000 to cell capex, representing a 5–10% premium over a conventional robot cell at current hardware prices. This is a manageable capex addition at scale, but it is not trivial for small and medium manufacturers evaluating ROI on a per-cell basis.

IP and Data Ownership

When AI systems learn from production trajectories generated at customer facilities — whether through vendor-integrated LLM tools or VLA training pipelines — the legal question of who owns the resulting model improvements and trained weights is not settled. The Open X-Embodiment dataset established precedent for academic cross-institutional data sharing under Creative Commons terms, but commercial production data operates under different constraints. Buyers should require explicit contractual terms from AI robotics vendors covering data retention, model improvement rights, and competitive use restrictions before committing to platforms that learn from facility-specific trajectory data.

Standards Gap: No ISO Standard for AI-Generated Robot Code

ISO 10218-1 and ISO 10218-2 govern robot design and integration safety. ISO/TS 15066 covers collaborative robot human-contact biomechanics. None of these standards address AI-generated motion programs specifically. ISO 10218-3, the proposed extension that would cover AI-driven robot control and code generation, is in pre-development as of 2026. Until this standard is published, manufacturers deploying AI-generated code in certified quality environments must construct their own validation arguments and document them separately from the standard compliance record.

Adoption Framework: Five Steps from Current Fleet to AI Augmentation

In practice, the transition from a conventionally programmed robot fleet to AI-augmented production follows a staged path. Attempting to jump directly to VLA deployment without the intermediate layers in place creates validation debt and higher failure rates than incremental adoption.

Step 1: Audit the existing fleet. Record which robot brands and controller languages are in use, what proportion of programs are manually taught versus OLP-generated, and the average programming time per new part. This baseline determines where Layer 1 LLM code generation delivers the fastest payback and which cells are candidates for Layer 4 AI-augmented OLP. Without this inventory, adoption decisions are made on assumption rather than evidence.

Step 2: Pilot LLM code generation. Select a controller language used across multiple cells (FANUC TP or ABB RAPID are the most common choices) and run a 60–90 day pilot with an LLM code-completion tool. Measure programming time before and after, track the number of AI-generated errors caught at the validation stage, and document the skill level required to achieve acceptable output quality. The goal is a validated time-savings figure and a clear picture of where human review is non-negotiable.

Step 3: Evaluate AI-augmented OLP. For welding or assembly cells with high part-mix changeover, evaluate a 3D scan-to-path or natural-language process generation system. Measure first-piece programming time, operator skill requirements, and defect rates on the first production run against the OLP baseline. For welding, verify that the system’s process library covers the relevant joint types and materials before deployment.

Step 4: Pilot VLA in a bounded cell. Identify one or two cell candidates where part variety is high (20+ distinct SKUs), tolerance requirements are loose (positional error above 0.5 mm acceptable), and the cost of occasional failure is manageable. Build a demonstration data collection plan, typically 100–300 hours of teleoperation across the target task distribution, before beginning model training. Run the VLA layer above the existing deterministic controller; do not replace the safety-rated motion controller.
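The screening criteria above can be written down as an explicit filter so they are applied the same way across a facility. The sketch below encodes the thresholds given in the text (20+ SKUs, positional tolerance looser than 0.5 mm, manageable failure cost); the `Cell` fields, cell names, and the rank-by-SKU-count rule are assumptions for illustration.

```python
from dataclasses import dataclass


@dataclass
class Cell:
    name: str
    distinct_skus: int
    tolerance_mm: float            # positional error the process can accept
    failure_cost_manageable: bool  # judgement from the cell's risk and cost review


def is_vla_candidate(cell, min_skus=20, min_tolerance_mm=0.5):
    """High part variety, loose tolerance, and tolerable failure cost must all hold."""
    return (cell.distinct_skus >= min_skus
            and cell.tolerance_mm > min_tolerance_mm
            and cell.failure_cost_manageable)


def pick_pilot_cells(cells, max_pilots=2):
    """Return up to max_pilots qualifying cells, highest part variety first."""
    qualifying = [c for c in cells if is_vla_candidate(c)]
    qualifying.sort(key=lambda c: c.distinct_skus, reverse=True)
    return [c.name for c in qualifying[:max_pilots]]


cells = [
    Cell("kitting-A", 35, 1.0, True),
    Cell("press-insert", 50, 0.05, True),  # too tight for VLA
    Cell("packing-C", 25, 2.0, True),
    Cell("bodyshop-D", 60, 1.0, False),    # failure cost too high
    Cell("label-E", 10, 3.0, True),        # too few SKUs
]
print(pick_pilot_cells(cells))  # ['kitting-A', 'packing-C']
```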

Step 5: Build the investment case. Using pilot results from Steps 2–4, build a 24-month investment case that quantifies programming labour cost reduction (Layers 1 and 4), changeover time reduction (Layer 4), GPU capex per cell (Layer 2), and the expected timeline to VLA production readiness for the specific application verticals in the facility. Include the cost of standards compliance work (validating AI-generated code against ISO 10218 requirements) as a line item, not an afterthought.
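A minimal version of that investment case is a few multiplications. All inputs below are hypothetical placeholders to be replaced with measured pilot figures; the point of the sketch is that compliance work and recurring software cost sit alongside GPU capex as explicit line items.

```python
from dataclasses import dataclass


@dataclass
class CaseInputs:
    programming_hours_saved_per_month: float  # Layers 1 and 4, from pilots
    labour_rate_per_hour: float
    changeover_hours_saved_per_month: float   # Layer 4
    changeover_value_per_hour: float          # value of recovered production time
    gpu_capex: float                          # Layer 2 cells only
    compliance_cost: float                    # validating AI-generated code against ISO 10218
    software_cost_per_month: float


def investment_case(x, months=24):
    """Return savings, costs, and net benefit over the evaluation horizon."""
    labour = x.programming_hours_saved_per_month * x.labour_rate_per_hour * months
    changeover = x.changeover_hours_saved_per_month * x.changeover_value_per_hour * months
    costs = x.gpu_capex + x.compliance_cost + x.software_cost_per_month * months
    return {"labour_savings": labour, "changeover_savings": changeover,
            "costs": costs, "net": labour + changeover - costs}


example = CaseInputs(40, 50, 10, 200, 6000, 15000, 400)  # hypothetical figures
print(investment_case(example))
```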

Layer Comparison: Production Readiness, Vendors, Cost, and Key Risk

| Layer | Production-Ready? | Representative Vendors / Platforms | Indicative Cost per Cell | Key Risk |
|---|---|---|---|---|
| Layer 1: LLM Code & Motion-Script Generation | Yes: in production at early adopters; validation step mandatory | GitHub Copilot (general), ABB RobotStudio Copilot, FANUC ZDT LLM assistant, Microsoft Robotics Copilot (research) | USD 0–5,000/yr (software subscription); no added hardware | Unsafe trajectory generation without a formal verification layer; no ISO standard for AI-generated code yet |
| Layer 2: VLA Foundation Models | Mostly pilot: production in high-mix bin picking, pilots in loose-tolerance assembly; not validated for welding or tight-tolerance processes | Covariant RFM-1, Physical Intelligence π0, Figure AI Helix, Google DeepMind Gemini Robotics, NVIDIA GR00T N1 | USD 4,000–8,000 GPU hardware plus significant data collection cost (100–300 hr teleoperation per task) | Stochastic inference can exceed process tolerances; no ISO 10218-3 standard; high data collection cost |
| Layer 3: Generative Simulation | Pilot / tooling phase: used as a VLA training data supplement; not a standalone production system | NVIDIA Cosmos, NVIDIA Isaac Sim 4.5, Genesis (Tsinghua), DreamGen, RoboCasa | USD 0 (open source) to USD 50,000+/yr (NVIDIA enterprise); GPU compute cost additional | Sim-to-real gap persists; synthetic data quality depends on physics accuracy; ROI tied to VLA deployment, which remains largely in pilot |
| Layer 4: AI-Augmented OLP / Natural-Language Teach Pendant | Yes: deployed in production for standard welding geometries, assembly, and high-mix changeover environments | ABB RobotStudio + Copilot, FANUC ZDT + LLM assistant, EVST EVS-AI welding system (3D scan + auto-path), Inrotech AdaptiveARC | USD 40,000–120,000 per cell (hardware + software for full 3D scan-to-path; LLM-only integration costs less) | Process library coverage limits: complex multi-pass exotic-alloy welds still require manual OLP; WPS traceability audit requirements add validation cost |

Frequently Asked Questions

Which generative AI layer should a manufacturer start with in industrial robotics?

Layer 1 (LLM-assisted code generation) is the lowest-risk entry point. It plugs into existing OLP tools and controller languages without changing hardware or safety architecture. Industry observations from early pilots indicate 25–40% savings in robot program authoring time, which a 60–90 day pilot is long enough to measure. Layer 4 (AI-augmented teach pendant) is the second logical step, especially for welding and assembly shops with high part mix. VLA pilots (Layer 2) should be deferred until the simpler layers are stable and until adequate demonstration data (typically 100–300 hours of teleoperation) has been collected.

How do manufacturers verify the safety of AI-generated robot trajectories?

Current best practice wraps any LLM- or VLA-generated motion target in a deterministic safety monitor that enforces workspace limits, velocity caps, joint-torque limits, and collision-avoidance checks regardless of what the AI layer requests. ISO 10218-1 and ISO 10218-2 govern robot design and integration safety; ISO/TS 15066 covers collaborative robot contact forces. ISO 10218-3 (covering AI-generated robot code specifically) is in pre-development as of 2026. Until that standard is published, the SOTIF (ISO 21448) reasoning framework from automotive — defining an operational design domain and restricting AI operation outside it — provides a workable validation approach.
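The monitoring logic can be illustrated as a filter between the AI layer and the motion command. The sketch below assumes a hypothetical Cartesian workspace box, TCP speed cap, and control period; it rejects targets outside the box and shortens steps that would exceed the speed cap. It is illustrative only: in a real cell these functions belong in the robot's safety-rated controller functions, not in application-level Python.

```python
import math

# Hypothetical cell parameters; real limits come from the risk assessment.
WORKSPACE_MIN = (-0.8, -0.6, 0.05)  # m
WORKSPACE_MAX = (0.8, 0.6, 1.2)     # m
MAX_SPEED = 0.25                    # m/s, Cartesian TCP speed cap
DT = 0.008                          # s, control period


def filter_target(current, requested):
    """Return a safe next TCP position, or None to trigger a protective stop."""
    if any(not lo <= r <= hi for r, lo, hi in zip(requested, WORKSPACE_MIN, WORKSPACE_MAX)):
        return None  # reject: outside the permitted workspace
    step = [r - c for r, c in zip(requested, current)]
    dist = math.sqrt(sum(s * s for s in step))
    max_step = MAX_SPEED * DT
    if dist <= max_step:
        return tuple(requested)
    scale = max_step / dist  # shorten the step so TCP speed never exceeds the cap
    return tuple(c + s * scale for c, s in zip(current, step))


print(filter_target((0.0, 0.0, 0.5), (0.1, 0.0, 0.5)))
```

The filter is indifferent to where the request came from, which is the point: the same bounds apply whether the target was produced by an LLM, a VLA policy, or a human.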

Does adopting AI-assisted code generation create OEM lock-in?

It depends on the implementation layer. LLM assistants integrated into vendor OLP tools (ABB RobotStudio Copilot, FANUC ZDT) are OEM-specific: the generated RAPID or TP code is portable, but the AI assistant itself is tied to the vendor platform. Open-source and third-party LLM tools that target multiple controller languages avoid this constraint. For VLA foundation models, OpenVLA, NVIDIA GR00T N1, and Physical Intelligence π0 (through its openpi release) provide open weights, while Figure AI Helix is proprietary. Buyers should assess the portability of trained model weights and training data before committing to a closed platform.

When will VLA foundation models be ready for production welding applications?

Current industry estimates point to 2026–2028 for limited deployment on standard weld joint geometries (fillet, butt, lap on carbon steel or aluminium), conditional on three developments: VLA inference reliability reaching uptime above 99% over multi-shift production; an ISO standard for AI-generated robot code that welding quality frameworks can reference; and adequate demonstration data collection infrastructure at the facility level. Heavy-geometry and exotic-alloy welding will likely remain on deterministic OLP-based systems through 2028 and beyond.

Who owns the robot trajectories generated by AI systems trained on customer production data?

This is unresolved commercially and legally as of 2026. The Open X-Embodiment dataset established academic data-sharing precedent under Creative Commons terms, but commercial production data operates under different constraints. When AI systems learn from customer trajectory data, contract terms between the manufacturer and AI vendor govern data retention, model improvement rights, and competitive use restrictions. Buyers should require explicit contractual clarity on data ownership, model rights, and data deletion at contract termination before deploying any AI platform that learns from facility-specific production data.

Related Reading

- Embodied AI in Industrial Robotics: How VLA Models Are Changing Robot Programming — architecture, benchmarks, and safety validation for VLA models in depth

- Embodied AI Data Collection: Teleoperation and Sim-to-Real (2026) — the demonstration data infrastructure that foundation models depend on

- Robotic Welding Offline Programming, Simulation and Digital Twin (2026) — four-layer welding software stack including AI teach-free systems

- Process Automation System — systems integration, safety standards, and commissioning frameworks

- Top Cobot Manufacturers 2026: Comparison — collaborative robot vendor landscape including AI-capability differentiation

Outbound references:

- IFR World Robotics Report 2025

- Stanford AI Index 2025

- ISO 10218-2: Safety of Robot Systems and Integration

- NVIDIA Isaac Sim